In-Group Bias in the Indian Judiciary: Evidence from 5 Million Criminal Cases

(English captions & Hindi subtitles available)

About the Seminar:

Paul Novosad studies judicial in-group bias in Indian criminal courts, collecting data on over 5 million criminal case records from 2010–2018. He exploits quasi-random assignment of cases to judges to examine whether defendant outcomes are affected by assignment to a judge with a similar identity. He estimates tight zero effects of in-group bias along gender and religious identity and finds limited in-group bias in some (but not all) settings where identity is particularly salient, but even here, his confidence intervals reject effect sizes smaller than those in much of the prior literature.

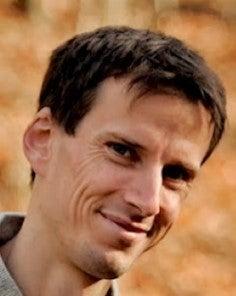

About the Speaker:

Paul Novosad is an Associate Professor of Economics at Dartmouth College. His research examines why poor countries have remained poor for so long, and what policy interventions can help improve people's lives in developing countries. His team builds new open source economic data from information age sources like satellites and private and government sector data exhaust.

Some of his recent projects have focused on judicial bias in India, the impacts of India's large-scale rural roads program, the impacts of mineral sector development, and on measuring intergenerational mobility in developing countries. He also has several projects on machine learning and statistical methods with applications to the U.S. mortality crisis.

He is a founder of Development Data Lab and a creator of The SHRUG - an open data platform for socioeconomic research in India, along with Sam Asher and Toby Lunt.

FULL TRANSCRIPT:

Naveen Bharathi:

Today, we have Professor Paul Novosad from Dartmouth College. My name is Naveen Bharathi. I'm a Postdoctoral Research Fellow at CASI, and along with my colleague, Nafis Hasan, I moderate this seminar series.

Today, Professor Paul Novosad will present his work on judicial bias in India, and today's talk is in partnership with South Asia Center at UPenn. Professor Novosad's research examines why poor countries have remained poor for so long, and what policy interventions can help improve people's lives in developing countries.

Some of his recent projects have focused on impacts of India's large scale rural roads program, impacts of mineral sector development, and on measuring intergenerational mobility in developing countries. He has also had several projects on machine learning and statistical methods with application to the US mortality crisis.

He also works on residential segregation in India. He's also the founder of Development Data Lab and creator of SHRUG, an open data platform for socioeconomic research in India, along with Sam Asher and Toby Lunt.

Today, Paul Novosad is going to present on judicial bias, In-Group Bias in Indian criminal courts, collecting data on over five million criminal case records from 2010 to 2018. The research exploits quasi-random assignment of cases to judges, to examine whether defendant outcomes are affected by assignment to a judge with a similar identity. The paper is [inaudible 00:01:35] of evidence of In-Group Bias on gender and religious identity and finds limited In-Group Bias in some settings, where identity is particularly salient.

But even there, confidence intervals reject effect sizes smaller than those in much of the prior literature. Before I hand over the mic to Paul, next week, we have a book talk by Professor Malini Sur, on Jungle Passports: Fences, Mobility, and Citizenship at the Northeast India-Bangladesh Border.

Like before, I just want to remind you that we are going to have a similar format to last time. If you have any questions, please send them directly to me in the chat box, and I will call on you to pose your questions to the presenter.

Please keep your questions brief, and to the point, so that we can get to as many questions as possible, and apologies in advance if I can't get to everyone. And please use the chat box only for questions.

Finally, please be mindful about muting your mic throughout the duration of the event. Also, please remember that you cannot record this presentation without the permission of CASI, or from the presenter. Once again, thank you for your interest, and for being here today, much appreciated, and happy Diwali.

With that, I'm going to hand over the mic to Professor Paul Novosad. Thanks, Paul, please go ahead, and happy to host you.

Paul Novosad:

Great. Well, thank you again, for hosting me here. It's always a pleasure to present at CASI. I've enjoyed the few times that I've been to CASI, and gotten to interact with the people across many different disciplines there. I think it's just a really phenomenal, phenomenal research group.

Thank you for that nice introduction. Naveen already has said a lot about the big idea of this paper, so that's going to lead me to skip some parts, I'm going to be flipping through this presentation, and showing you maybe some highlights, given just a half hour to go through. There's a lot more in this paper.

This is a big research team working together on this paper. And it kind of took a whole lot of people and a whole lot of different skills to just create this dataset, which we're just starting to work with. This is our first paper coming out of a lot of work to scrape the e-Courts data from India, and hopefully, the first of many more.

The basic motivating facts of this paper are that Muslims and women in India have unequal access to economic opportunities. There's a lot of evidence of that from various sources.

It's particularly interesting, we think, to study unequal access to the judicial system, because the idea of the judicial system is supposed to be a mechanism of government through which you can receive fair treatment, even if you are discriminated against in other settings. So if we find unequal treatment in the judicial system, that creates a significant problem, because that's as far as the law can take you, in terms of protecting you from discrimination.

There's a lot of evidence on bias in criminal justice systems in the US, and in rich countries, and considerably less in developing countries, including India. So this is not meant to be a comprehensive study on judicial bias in India. I'm going to talk specifically about the narrow question we're trying to ask. We're focusing on In-Group Bias by religion and gender in the Indian Judiciary.

Both of these are kind of narrowing the scope. In-Group Bias is one type of bias, religion and gender is one type of group identification. That's what we're looking at now, and there's a lot more to do here, beyond what we have done.

So you won't be surprised to hear that Muslims and women are underrepresented in the Indian Judiciary. Only 4% of High Court judges in India are Muslim, compared to about 15% of the population. And 28% of district court judges are women, and yet a disproportionate share of under trial prisoners are Muslims. So there are these disproportionate ratios, and we think that if there's In-Group Bias in the Judiciary, this will further tilt the system against Muslims and women, creating population level discrimination.

We collected records from 80 million cases, close to the universe of cases heard in India's lower courts from 2015 to 2018, and something less than the universe for the five years before that. We used machine learning to classify judge and defendant names by religion and gender.

Then we used the exogenous random, it's not random. It's exogenous, as if random assignment of judges to defendants, to look at In-Group Bias. In other words, we're interested in knowing when a Muslim is placed before a random judge in a criminal court, does his or her outcome differ, depending on whether that judge is a Muslim?

Do you get a better outcome when your judge matches your broad identity group? And the literature suggests that in a lot of settings, you do. In this setting, we found that you don't.

We obtained very tight zero estimates of In-Group Bias on gender and religion. And I'll show you that we can rule out effect sizes much smaller than those that are being estimated by other studies.

We do find some evidence of bias, when we drill down and focus on more narrow social groups, or on specific contexts that activate identity. Even here, it only happens in a subset of cases for a much smaller minority of the population than you would think of, if thinking about just women or Muslims. And I'll talk toward the end about some ideas on how to think about those findings.

Again, we're focusing on one form of bias in one part of the judicial system. So there are many stages through which a case enters the judicial system. Some kind of allegation is made, police choose whether or not to investigate it, police can make a decision on whether to file a charge, prosecutors can make certain types of arguments. Finally, given all this information, judges rule on the case.

There's a lot of selection that takes place before we even see cases arrive in this e-Courts dataset. So there's no sense in which our results can tell you that there's not bias in the Indian judicial system, we're only observing one component of it.

Second of all, we're only looking at one type of bias, albeit a type of bias which has been widely identified in other settings. But there could be other forms of bias that we're not well suited to measure in this context, or even trying to measure them in this context.

For instance, it could be that all judges discriminate against Muslims, or all judges discriminate against women. We're not going to pick that up in our study, because we're only looking at how the identity of the judge affects your outcome. Again, we think this is a relevant and interesting topic to study, given the prior literature, but it is not a comprehensive assessment of bias in the judicial system.

Our data source is the Indian e-Courts platform, at ecourts.gov.in. This is a platform through which the Indian government has been gradually digitizing all of the district courts, and you have this kind of metadata on every single case, including the nature of the disposal, and some kind of assignment to a judge. And you can follow this case from the minute it enters the judicial system, until it exits.

The scope of the data is the lower judiciary, District and Sessions Courts, and subordinate courts across all districts in India. I think we have about, I'll show you on the next slide, what the count is. It's about 7,500 trial courts and 80,000 judge records.

This is the entry point of all criminal cases in India, and most of these cases will not rise to a higher level. We're focusing on criminal cases, there's about eight million of these. It's much easier to describe the outcome in a criminal case, than it is in a civil case, where you don't have a simple acquittal or conviction decision.

You have some kind of settlement that's being made. So criminal cases, we focus on these, because it's just a little bit easier in these cases to determine whether an outcome is desirable or undesirable for the defendant.

We don't have demographic data. We don't have gender and religion, or caste information in the data, but we do have individuals' names. So what we did in this paper is, we designed a neural network to classify names to gender and religion. I know some other people in Zoom have done similar things.

This is our first time doing this with a neural network approach, and it was pretty neat. We've done most of our matching beforehand, with fuzzy matching, but that doesn't work very well for assigning religion to names.

These two examples here give you an example of two pairs of names, Khan and Khanna. The first is distinctly Muslim, the second is distinctly non-Muslim. A fuzzy match will find these two names to be very, very close, whereas the fuzzy match will find these two spellings of Muhammad to be very different, but in fact, these names are very close.
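The problem with fuzzy matching described above can be illustrated with a plain edit-distance calculation. This is a minimal sketch, not the matching code used in the paper: the function `edit_distance` and the two spellings of Muhammad are assumptions chosen for illustration, since the slide's actual spellings are not in the transcript.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: the minimum number of single-character
    insertions, deletions, or substitutions that turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


# Names from different communities, yet only two edits apart.
print(edit_distance("khan", "khanna"))       # 2

# Two (hypothetical) spellings of the same name, yet further apart.
print(edit_distance("muhammad", "mohamed"))  # 3
```

A string-distance rule would therefore treat Khan and Khanna as closer than two spellings of the same name, which is the wrong ordering for assigning religion.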

The neural network just does a much better job. It looks at pairs of characters, or subsets of characters at a time, walking forward through the name, walking backwards through the name, so it can do a little bit better at understanding context, and we really got remarkable accuracy: a 97% out-of-sample match on a manually classified, held-out test set of names.
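To make "pairs of characters, walking forward and backward through the name" concrete, here is a minimal sketch of the kind of character-level input such a model could read. It is only a feature-extraction illustration under assumed names (`char_ngrams`, `forward_backward_ngrams`), not the network or the preprocessing used in the paper.

```python
def char_ngrams(name: str, n: int = 2) -> list:
    """Return the overlapping character n-grams of a name, in order."""
    name = name.lower()
    return [name[i:i + n] for i in range(len(name) - n + 1)]


def forward_backward_ngrams(name: str, n: int = 2) -> tuple:
    """N-grams read left-to-right and right-to-left, mimicking a model
    that walks through the name in both directions."""
    return char_ngrams(name, n), char_ngrams(name[::-1], n)


for name in ("Khan", "Khanna"):
    forward, backward = forward_backward_ngrams(name)
    print(name, forward, backward)

# The character pairs that Khanna has and Khan lacks are the kind of
# local pattern a character-level classifier can learn to weight.
print(sorted(set(char_ngrams("Khanna")) - set(char_ngrams("Khan"))))
```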

So this is a neat process to go through, and we were all surprised by just how effective this could be. But we're very confident in the classification of names that come out of this.

Okay, so we're going to classify litigants and judges as male or female, and as Muslim or non-Muslim. Obviously, religion in particular is a group that can be disaggregated further. We didn't have a lot of success trying to identify India's other religions, Jains, Buddhists, Christians, who make up less than 10% of the population.

They're not very well represented among judges, and the names are not as clearly distinctive, whereas Muslim names are particularly distinctive. And Muslims, I think, are a group that's particularly interesting to study in India right now, given the rise in communalism, and changing attitudes toward Muslims. Let me skip where the training data came from, for that.

All this data, all this judicial data is now open to the public. We decided that we should just post it, and make it available to everyone, rather than waiting for a paper to get published, because, as you know, it takes endless amounts of time for papers to get published in economics.

This is all up there, 80 million cases, not just the criminal cases. A lot of people have gotten in touch with us who are using this data, studying really interesting topics, that we would never have thought of studying before, environmental law, sedition law, lots of really neat things going on.

It's definitely been a very positive experience to post quality data early, rather than trying to hoard it for future projects. So we're very happy to have this worked out, very strongly recommend doing something similar. And if you're interested in these data, this link can get you to them.

Okay, these are the charge rates and the acquittal rates for women relative to men in the data. So you can see that women get charged.

This is your likelihood of appearing in our data, divided by your likelihood of appearing in the population. You can see that women are disproportionately less likely to be charged with a whole range of crimes, and then, conditional on being charged, they're much more likely to be acquitted.
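The ratio described above (share of a group among people charged, divided by that group's share of the population) is simple to compute. The sketch below uses made-up counts purely for illustration; none of the numbers come from the paper, and `representation_ratio` is a hypothetical helper name.

```python
def representation_ratio(group_in_data: int, total_in_data: int,
                         group_in_pop: int, total_in_pop: int) -> float:
    """Share of the group in the case data divided by its share of the
    population. Below 1 means under-represented among those charged."""
    share_in_data = group_in_data / total_in_data
    share_in_pop = group_in_pop / total_in_pop
    return share_in_data / share_in_pop


# Hypothetical counts: 100 of 1,000 defendants are women,
# while 480 of every 1,000 people in the population are women.
ratio = representation_ratio(100, 1_000, 480, 1_000)
print(round(ratio, 3))  # a value well below 1
```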

This slide is motivation for needing some kind of better identification strategy for thinking about judicial bias than just looking at outcomes overall. It would probably be incorrect to look at these data and to say, "Oh, the judicial system is unfair to men, and too kind to women, because too many men are being charged, and not enough women are being charged."

We think that it's likely that the rate of commission of many of these crimes is much lower for women than for men. We can produce a similar graph for Muslims. It's more ambiguous.

There are higher charge rates across a range of crimes, particularly around marriage offenses, which we think is probably an interesting thing to look into, variations in the acquittal rate. But this by itself isn't telling you very much about bias, because we don't observe those earlier stages of how often new charges get leveled, how often charges get investigated, how solid a case was made in the court, who had access to attorneys, and so on.

Instead, what we'll do is focus on one specific type of bias, which is thinking about how the identity of the judge affects the case outcome that you get. We're relying on an assumption that cases are as good as randomly assigned to judges. So cases are assigned to judges, criminal cases are assigned to judges, following a very clear set of rules.

There's a mapping of police station and charges to courthouses and rooms in that courthouse. So if you are charged with larceny in Police Station A in some town, then that set of police station times charge directly maps onto a specific courtroom. There's no discretion there.

Then judges circulate through those courtrooms. A judge will stay in a given court for about three years. But during those three years, there's a rotation around the courtrooms. They spend about three months at a time in a given courtroom. And it takes two to three months for a case to get from the first information report filed by police to the court.

So it would be very hard to even know which courtroom you're being assigned to, and it'd be very hard to get the timing right to get a specific judge. This is effectively giving you an arbitrary judge. In fact, forum shopping, meaning, trying to select a judge who you think is going to be friendly to your case, this is prohibited by the law, and according to all the legal scholars, and actually lawyers we've talked to, is not practiced.

We think that the assumption of random assignment, conditional on court-time and charge fixed effects, is valid. I can show you a balance table here, which again suggests that female and Muslim defendants are no more likely to be assigned to female and Muslim judges than non-female and non-Muslim judges.

This is our estimating equation for thinking about In-Group Bias. And this is a standard estimating equation in the literature. Your Outcome Y, for Individual I, in Court C, at Time T, think of this as a binary outcome for acquittal, that's what I'm going to show you here, depends on whether your judge is male, whether you are male, and then the interaction of your judge being male, and you being male.

Whether you are male is something that's not randomly assigned, so we're going to estimate beta two, but it doesn't have a causal interpretation. But beta one and beta three do have causal interpretations.

Beta one is the effect on a female defendant's rate of acquittal, the marginal effect of her being assigned to a male judge, rather than a female judge. Beta one plus beta three is the same effect, but on a male defendant. Then the difference between these two effects, that's beta three, the interaction term, that's the In-Group Bias.

Then we're always controlling for court-time fixed effects, and a range of other covariates, including charge fixed effects. So it's maybe easiest to see if we just go to look at the results.
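Written out, the estimating equation described verbally above would look roughly as follows. The slide itself is not in the transcript, so the notation is an assumption: $\phi_{ct}$ stands for the court-time fixed effects, $X_{ict}$ for the other covariates (including charge fixed effects), and $\varepsilon_{ict}$ for the error term.

$$
Y_{ict} = \beta_1\,\text{judgeMale}_{ict} + \beta_2\,\text{defMale}_{ict} + \beta_3\,\bigl(\text{judgeMale}_{ict} \times \text{defMale}_{ict}\bigr) + \phi_{ct} + X_{ict}'\delta + \varepsilon_{ict}
$$

Under this notation, the effect of a male judge on a female defendant is $\beta_1$, the effect on a male defendant is $\beta_1 + \beta_3$, and the in-group bias is the difference between the two, $\beta_3$.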

On each of these tables, I'm going to show you beta one in the first row, beta three in the second, sorry, beta one plus beta three in the second row, and beta three in the third row. So what this -0.008 tells you is that if you're a female defendant, being randomly assigned to a male judge lowers your acquittal rate by about 0.8 percentage points, on a baseline acquittal rate of around 20%.

That suggests that female defendants are worse off when they're being assigned to male judges. But then the coefficient for male defendants is almost exactly the same. Male defendants are also worse off when they're assigned to male judges. The own gender bias is the difference between these two coefficients, which is a very precisely estimated zero across all our specifications, including a judge fixed effects specification, which is kind of the most constraining specification that there is.
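The logic of reading the three rows can be sketched with four cell means. In a model with only the two indicators and their interaction (no fixed effects or covariates), the regression coefficients are exactly differences of these means. The acquittal rates below are hypothetical, chosen only so that the female-defendant effect matches the -0.008 mentioned in the talk; the function name is also an assumption.

```python
def in_group_coefficients(acquittal: dict) -> tuple:
    """acquittal maps (judge_male, defendant_male) -> mean acquittal rate.

    Returns (beta1, beta1 + beta3, beta3) for the saturated model
    Y = b0 + b1*judge_male + b2*def_male + b3*judge_male*def_male.
    """
    effect_on_female = acquittal[(1, 0)] - acquittal[(0, 0)]  # beta1
    effect_on_male = acquittal[(1, 1)] - acquittal[(0, 1)]    # beta1 + beta3
    in_group_bias = effect_on_male - effect_on_female         # beta3
    return effect_on_female, effect_on_male, in_group_bias


# Hypothetical cell means, keyed by (judge_male, defendant_male).
rates = {(0, 0): 0.200, (1, 0): 0.192, (0, 1): 0.180, (1, 1): 0.172}

b1, b1_plus_b3, b3 = in_group_coefficients(rates)
print(round(b1, 3), round(b1_plus_b3, 3), round(b3, 3))
```

Here both defendant groups do slightly worse before a male judge, so the interaction term is zero even though the judge-gender effect is not.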

And we run a similar estimation, looking at Muslims, again, the own religious group bias is extremely small, and these standard errors are tiny, right? We're able to rule out a bias of about half a percentage point on a baseline acquittal rate of 18%. So we're able to rule out really small amounts of bias in these estimates.

Now, it's natural to be, "This is India, so why are you only looking at gender and religion? It seems like caste would be worth looking at, as well." We totally agree.

It's harder to look at caste, one, because caste is more complex, it's hierarchical. So it's not clear at what level, whether it's Jati, or a broad caste group that's going to matter, or even a more narrow familial social group relationship.

It's also much harder to classify caste on the basis of names. So we don't have a good name to caste correspondence yet. That's something that we're working on.

We're interested in this question. We want to get some kind of proxy for this. So we use something that's been done in the literature by [Ray 00:19:46] and Vig and others. And we create a binary variable that indicates that the judge and defendant share a family name. This is correlated with sharing caste, but it's an imperfect proxy.

For instance, it incorporates religion, as well as caste. So two people with the same religion are more likely to share the same last name, even if they're not of the same caste.

Some groups are overly aggregated. You can think of the Singhs and the Kumars. If two individuals are Singhs, or two individuals are Kumars, we may not be learning that much about caste similarity, and some groups may be overly disaggregated.

In other words, two individuals may have different last names, and they may have the same caste. Nevertheless, this is the best idea we had, given the data that's available. So these results are a little bit nuanced.

Columns One and Two are just our full sample estimates of the effect of having the same last name. We have judge fixed effects in, I guess, Columns Two and Four, on judge fixed effects.

We have last name group fixed effects in these regressions, as well. The estimates are really small, really close to zero. This one is a tiny bit negative, but in terms of magnitude, it's really, really small.

On average, we find no bias. The average individual going into a courtroom, the average treatment effect for that individual of being matched to a judge with the same last name, is a fairly precise zero, or is even weakly negative, which would mean you would be very marginally worse off if your judge has the same last name as you.

However, these estimates are driven by large groups, right? If you think about the Singhs, for example, I don't know the exact number, but they number in the tens of millions. So very common groups are going to be mostly represented.

What these columns, One and Two, are telling you is that on average, for groups with very common names, you don't see a lot of In-Group Bias. For Columns Three and Four, we're going to weight these estimates by the inverse of the group size. So in Columns Three and Four, you can think of each last name group as one observation, or as equivalent to one observation.

We see that, when we do that, we do find a small amount of In-Group Bias, in that being in a group, at the group level, being assigned to a judge with the same last name as you, does raise your acquittal rate by about 1.3 percentage points. This is a little bit less than 10%. This is about a seven or 8% effect. So it's not irrelevant.
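Why inverse-group-size weighting makes each last-name group count as one observation can be seen in a toy weighted mean. This is a sketch with invented data, not the paper's regression: the group labels, outcome values, and function name are all assumptions.

```python
from collections import Counter


def inverse_group_size_mean(values: list, groups: list) -> float:
    """Weighted mean where each observation gets weight 1 / (size of
    its group), so every group contributes equally to the result."""
    sizes = Counter(groups)
    weights = [1.0 / sizes[g] for g in groups]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


# Hypothetical data: one very common name with no in-group effect,
# one rare name with an effect.
groups = ["common", "common", "common", "common", "rare"]
values = [0.0, 0.0, 0.0, 0.0, 1.0]

unweighted = sum(values) / len(values)
weighted = inverse_group_size_mean(values, groups)
print(unweighted, weighted)  # 0.2 0.5
```

The unweighted average is dominated by the common name, while the weighted average equals the simple mean of the two group means.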

This is a substantial effect, but it's affecting a minority of the population. We have another regression, which, I started regenerating this table at 11:30. I was hoping to get you the new table, but I don't have the new table quite yet.

But what we do is, we do the same type of regression, but we interact the same last name with the size of the group. And we show that the same last name only matters if your group size is small. That kind of makes sense, the more informative the last name is, the more likely it is to matter for your acquittal rate.

Okay, finally, we're interested in looking at some contexts that activate bias. As we all know, identity is a fluid concept, and everybody has many overlapping identities, and context can make one identity particularly more salient than the others.

We look at three particular cases where we think identity may be more salient, one where victim identity is opposite to the defendant. So maybe when Muslims are charged with crimes against non-Muslims, then judge religious identity gets activated, whereas when Muslims are charged with crimes against other Muslims, the identity may not be activated.

Similar tests, we look at the gender of judges ruling on crimes against women. And finally, we look at the randomly varying over the course of the year cycle of Ramadan, to ask whether religious bias gets activated during Ramadan. So I will go through these relatively quickly.

Perhaps rather than explaining the interaction tables, I'll just highlight the coefficient of interest. Mismatch between victim and defendant does not seem to matter for gender. That's the interaction effect of victim mismatch, nor does it matter for religion.

However, when we look at the own religious bias for the Ramadan interaction, we do find our estimates are getting a little bit noisier here, because Ramadan is only one month of the year, and it occurs in the summer, during our sample, when the case count is already lower. So we do find that own religious bias does seem to bite a little bit more during Ramadan.

But again, this is a small ... It's less than one-twelfth of all cases, because there are fewer cases being observed in the summer in our period. If we had a longer sample period, we could control for seasonal effects, as well. We can't do that, because we only have an eight-year sample, where Ramadan is mostly falling at the same time of year.

To conclude, we found little evidence of large scale judicial In-Group Bias against women and Muslims, despite anecdotal evidence of significant, and not just anecdotal, in fact, I'll show you a few studies. Hopefully, I have done here in a couple of slides. Yes, I do.

So more than anecdotal, despite other research evidence of bias toward women and Muslims in other contexts of society, and despite this very specific form of judicial In-Group Bias being found in almost all other papers on the topic. We did find bias in some areas where identity is particularly salient, in particular, when groups are very small, or religious bias during Ramadan. Even here, it was relatively small in magnitude compared to prior studies.

In-group effects like this do appear in other Indian settings. Just to give a few examples, lender-borrower relationships in Fisman, Paravisini and Vig, they find that loan officers give larger loans and repayment rates are higher, when the loan officer and the borrower have the same last name. So it's using exactly the same identification we're using in the caste analysis, and they find a significant effect on the ability to give loans, and have them be repaid.

Sharan and Kumar find that the alignment between the ward politician and the GP politician affects the delivery of public services. In particular, public services are less likely to be delivered when the ward officer is a non-SC, but the GP leader is an SC.

Then Yusuf Neggers has this really nice paper showing that the identity of polling officers affects how well votes are counted. So minority votes may be less likely to be counted, may be more likely to be rejected, when there are no minority polling officers there.

While it does seem like this kind of bias is active in Indian society, it won't be surprising to hear that that's the case. Why didn't we find it in our context? One explanation that fits well, with the empirics we found on caste, is that group size may be important, maybe multiply that by the economic distance between judges and litigants. So you have many aspects of your identity.

Perhaps when you are a Muslim judge, a member of group size of 200 million, and you're an upper middle-class individual, and you're looking at a defendant who's a lower-class Muslim charged with a crime, perhaps religious similarity isn't the first level of identity that gets triggered.

Maybe high status, low status is the first level of identity that gets triggered, or cop/robber type of identity, right? So there's competing claims on your identity. And maybe these groups, looking at all Muslims and all women in this judicial context, are just too large. Indeed, when we look at smaller groups, we find that identity seems to matter a little bit more.

But we can't rule out other possibilities. There's, I think, mostly anecdotal evidence that the court may function as a marketplace, in that certainly, you can hear many stories of judges taking payments to deliver verdicts. In a pure marketplace, the judge may just deliver the verdict to whoever's willing to pay the most, and identity may play less of a role in that context.

Finally, I'm going to show you some evidence that the default hypothesis with which we approach this paper, that there should be very large In-Group Bias in this setting, may be overstated, due to another form of bias, that being publication bias in the literature. So this is a simulated example, to show you how a funnel plot works, because these aren't necessarily that intuitive to read.

I've simulated a series of experiments on a treatment program that has a true effect size of about 0.08. So around here, where my mouse cursor is going down, this is the true effect size. If you were to run a test of this experiment, if you have high statistical power, and very low standard error on the left axis, you'd get a pretty precise estimate of about 0.08.

The lower your statistical power is, the more diffuse your estimates are, but they're still centered around this true effect size of 0.08. But you'll notice that all these estimates outside the brown line are statistically significant, whereas the ones inside the cone are not statistically significant.

So if we now have a publication bias process, where null estimates are difficult to publish, then you can get a distribution of effect sizes in the literature that looks something like this, right? None of the papers in here got published, so what we see in the literature are all these papers, and if you were just to take the mean of the published points, you'd arrive at a much higher estimate of bias.

In fact, the more noisy your estimates are, the more bias you would arrive at, because you're, again, filtering out all the estimates that have no effects. What we've done is we've gone through the literature, and we found every study that we could find, that exploited a randomized assignment of defendants to judges, and looked at identity matches, like basically, the exact same study design as us.
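The filtering mechanism behind the simulated funnel plot can be reproduced in a few lines. This is a minimal sketch, not the simulation shown on the slide: only the true effect of 0.08 comes from the talk, while the standard errors, the number of studies, the 1.96 significance cutoff, and the function names are assumptions.

```python
import random


def simulate_literature(true_effect=0.08, std_errors=(0.02, 0.05, 0.1, 0.2),
                        studies_per_se=5000, seed=0):
    """Draw study estimates around a true effect and flag as 'published'
    only those that are statistically significant at the 5% level."""
    rng = random.Random(seed)
    studies = []
    for se in std_errors:
        for _ in range(studies_per_se):
            estimate = rng.gauss(true_effect, se)
            published = abs(estimate / se) > 1.96
            studies.append((se, estimate, published))
    return studies


def mean(xs):
    xs = list(xs)
    return sum(xs) / len(xs)


studies = simulate_literature()
all_mean = mean(est for _, est, _ in studies)
published_mean = mean(est for _, est, pub in studies if pub)
precise_published = mean(est for se, est, pub in studies if pub and se == 0.02)
noisy_published = mean(est for se, est, pub in studies if pub and se == 0.2)

print("mean of all estimates:      ", round(all_mean, 3))
print("mean of published estimates:", round(published_mean, 3))
print("published, most precise:    ", round(precise_published, 3))
print("published, least precise:   ", round(noisy_published, 3))
```

In this setup the average over all studies stays near the true effect, while the average over published studies is inflated, and the inflation is largest among the least precise studies.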

Our data points are the red points on this graph, and the prior studies in the literature are the black points on this graph. So there's not enough sample here to run the publication bias test. But visual inspection of this graph strongly suggests that you do not see a cone-shaped set of estimates. It seems like the weaker your precision, the higher the estimates of judicial bias that you're getting.

Even the only negative estimate of bias is kind of way outside here to the left, again, outside this cone of statistical significance. So I take this as fairly compelling evidence that there's significant publication bias in this literature.

It's not to say that these studies are wrong. But if you can imagine what the true distribution of data looks like, maybe there's some kind of cone of data points, maybe centered around a smaller In-Group Bias effect, than the ones that are most talked about in the literature.

So I think that's my 30 minutes. Let me just conclude with some topics that we are thinking about in further studies. And I think this, as I said, is a starting point, and by no means, a comprehensive statement about bias in the Indian Judiciary.

We're trying to get data from police stations to think about bias in early stages, earlier stages of the criminal justice process. Also interested in looking at bias in higher court, where judges' discretion may be greater. There's a smaller number of cases, but the text is much more accessible, so there's a lot of interesting stuff that can be done here.

Now, obviously, going deeper on the caste and income dimension is something that we're continuing to think about. So why don't I leave it there? I'll stop the screen share, and would be excited to hear people's comments and questions.

Naveen Bharathi:

Thanks. Thanks for doing such a fascinating ... I think I have not seen anything on these lines, actually. So I'm also kind of thinking about this. I have a few questions, but I'll ask them later. But [Tariq 00:32:39], do you want to go first and ask?

Tariq Thachil:

Sure. Yeah, I can go first. Paul, thanks so much for that fascinating presentation. As always with your papers, I mean, the amount of data work involved is just incredible. Also, it's just amazing that you guys have made this a public good and opened up a much needed research agenda on this topic.

I think it's really fantastic. I'm also excited to see all these ideas that you guys have been hearing about, that people are trying to work with, with your data, seeing the mushrooming of papers on it, and makes me think we should do a panel at some point, even, at CASI, to see different uses of this data and what people are finding, and what people think is interesting to work on, on this topic.

So, a couple of questions. One, just very narrowly, I'm just curious how you guys did the sample match validation process? I mean, it was amazing to see that success rate of 97%. I'm just curious, just narrowly, what that process looked like.

The slightly broader question I was thinking about, as you guys were, at the end, kind of actively thinking about, "What's going on here, in terms of the larger context of the Indian judicial system?" And I was wondering, are there particular features, macro features of the Indian judicial system, that actually almost mitigate against this form of bias?

One was, I was thinking about, is the sheer volume of cases and the kind of understaffing? I mean, that kind of lack of judicial capacity, there was just a report, I think, that came out of just the vacancy rates for judges, especially in lower courts, and just the high rate of vacancies in high court and lower judges, and even more in district courts.

We all hear these stories that get reported in the press from time to time, and the long wait times, people are under trials, just waiting and waiting for ... So I'm wondering if that kind of pressure on existing judges and assignments, in some ways, I'm just trying to think of the pressure points in a system, where there's that much volume and that much lack of capacity.

If you guys think that that actually, in some ways, weirdly goes against ... I mean, just thinking of how these cases are often adjudicated. You can sit in here, like the way that they're just kind of processed, that it actually goes against anything that requires information, even just matching and bias.

Whether that's something that's going on, or whether there are other structural features of, especially the lower courts in India, that make you think, that actually the requirements for bias are in a lot of these other examples that you're using, where we find own group bias, they're more intimate relations, or they're often relations where the scale is a bit smaller. So it's a bit easier for the information and actually acting on the information to happen.

But the second was really thinking about class. I think you guys are exactly right. I know that doesn't really help you with this particular dataset. But that's so much about how you'd be treated, even getting up to that, would be about class categorization.

Again, maybe just situating, because I'm not as familiar with the judicial biases, which are elsewhere. Just situating that in other cases, has there been any work in trying to measure income effects, and how that would work?

And whether you think that's a productive place for more granular efforts, even if they're less broad in scope than yours, to make headway in the next wave of studies on this? So sorry, some rambling thoughts, but really, really fascinating work. Thank you for sharing it.

Paul Novosad:

Cool, thank you. I guess I'll answer those now, since we don't have too long a backlog of questions. So the sample match process is, we had three native Indians on our team. We gave them each a list of 100 names, and asked them to classify the religion and gender of those names, or to indicate whether they couldn't classify them.

Then we compared what the algorithm did to their classifications. That's where the 97% came from. And just, a sort of funny story, one of [Elliott's 00:36:36] computer science students was the one who helped tune our neural network algorithm to these data.

Initially, she came back to Elliott and said, "I don't know what I'm doing, I think there's something wrong, because I've never seen a context with such a high classification accuracy." So just a funny, funny feature of this context, that turns out names are extremely predictive. I guess that's clear to people who know India and look at names.

I mean, certainly in the United States, maybe less so now, but for a long period of time, names are extremely indicative, extremely clear indications of gender. So it turns out, this is an easy process. It wasn't clear, when we started, that this was going to be an easy process, but for this particular case, it was.
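The validation step described above, comparing the algorithm's labels with human classifications, can be sketched as follows. The names and labels are made-up placeholders. Skipping names that an annotator marked as unclassifiable is an assumption of this sketch, not something stated in the talk.

```python
def agreement_rate(algorithm_labels, human_labels):
    """Share of human-classified names on which the algorithm agrees.

    Names the annotator could not classify (label None) are skipped;
    that exclusion rule is an assumption of this sketch.
    """
    compared = [
        (algorithm_labels[name], label)
        for name, label in human_labels.items()
        if label is not None
    ]
    if not compared:
        raise ValueError("no classifiable names to compare")
    matches = sum(1 for predicted, actual in compared if predicted == actual)
    return matches / len(compared)

# Toy placeholder data, for illustration only.
human = {"name_a": "female", "name_b": "male", "name_c": None, "name_d": "female"}
algo = {"name_a": "female", "name_b": "male", "name_c": "male", "name_d": "male"}
print(agreement_rate(algo, human))
```

The same function would apply to religion labels, with one call per annotator list.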

Okay, so macro features mitigating bias? Yeah, interesting question. Judicial capacity is clearly one of the major challenges facing the Indian Judiciary. My gut instinct on it would be that, the more capacity constrained you are, the more likely you are to use identity characteristics to make rulings.

One could come up with alternate stories. So it's a testable hypothesis, which is interesting. One could look at more time-constrained periods of the year, when the backlog is higher, or courts that are more capacity constrained, or courts that are missing appointments. That's something that may be interesting to look at.

I will say we didn't find ... We, of course, looked at this state by state, and we're like, "Oh, maybe there's a ton of bias in the north, and no bias in the south." There are no regional patterns of heterogeneity that leapt out at us. It was really a lot of zeros, in a lot of cases.

I think the intimacy of the relationship is a great point, but this is not a particularly intimate relationship. And the class distance goes away, goes at some distance here, as well. So I think that's a candidate explanation. We're not going to be able to show that here, but it's something worth thinking about some more.

And just thinking a bunch about differences in these other settings, if you think about the loan officer to borrower relationship, that's a cooperative relationship, in some sense, right? They both want that relationship to succeed, so it's a different context. And I think, in some of these settings, it maybe is easier to share information between members of your own group.

Body language is easier to read, so you can maybe be more successful at arriving at cooperative outcomes. That's my internal mechanism for thinking about, Marcella Alsan's paper, that when you assign black patients to black doctors, she does this randomized experiment, you get better outcomes for those patients.

Certainly, bias is one possible interpretation, but I think there is potentially a communication relationship angle that can explain some of these cooperative outcomes, as well. So I think that's another useful thing to think about: is this a cooperative or an antagonistic circumstance? Of course, many of the other papers in the US, in particular, are exactly in this judicial setting, and race seems to matter quite a lot.

Yeah, we haven't thought of a good way to think about class specifically yet. We're very interested in understanding how class affects your outcomes in the judicial system. So we thought we'd try to assign people incomes based on their names.

You could, and you could find that people with higher income names do, on average, get better results, but that was not ... The variation was too high. I think we're doing something really, really good there. Everything I showed you-

Tariq Thachil:

Sorry to interrupt.

Paul Novosad:

Yeah.

Tariq Thachil:

But do you guys have any ... There's no information on the kinds of lawyers who are engaged in these cases, right?

Paul Novosad:

There is. We have ... we don't use it here. It's in the public data, I think the lawyer's name is available for about two-thirds of cases.

Tariq Thachil:

I mean, this wouldn't be possible, maybe. I'm just trying to think, it's something about who is representing them, I mean, not for everybody, but that would be some source of information that would be relevant to their income profile and who they're able to afford. So I don't know, there may be something there to explore, even for a subset.

Paul Novosad:

Yeah, and that's something, just the idea of who gets what kind of representation is interesting in and of itself.

Tariq Thachil:

Yeah, yeah.

Paul Novosad:

And that's something that could be done here, that we haven't looked into at all.

Naveen Bharathi:

Also, do you have any information on investigating officers? The police officers?

Paul Novosad:

We are working on getting that for a couple of states from some police station datasets, that we can link up to our data. We don't have it yet. But tell me what you would do, if you had it?

Naveen Bharathi:

I thought that if the investigating officers are the same dominating caste, or a different region, maybe there is some bias there, yes, the bias towards them. Because a lot of it happens during the entire process, and evidence collection process, and how scientific-

Paul Novosad:

Yeah. Was the investigation done effectively, right?

Naveen Bharathi:

... Yeah.

Paul Novosad:

That can be a place where there's a lot of discretion, just room for thorough or not thorough investigations.

Naveen Bharathi:

Right. Most of the SC/ST atrocity cases, I mean, I know about that. Because most of the SC/ST atrocity cases, if the investigating officer comes from a dominant non-SC/ST background, then, [inaudible 00:42:31], they have it, but, I mean ... Yeah.

Paul Novosad:

That's interesting. Is there a study on that, or one we ...

Naveen Bharathi:

Yeah. So I'm not right away getting, but [inaudible 00:42:45]. There was, again, another question from my side. What do you think are the implications towards the increasing diversity in Indian lower judiciary?

Because there's always been a talk about, because there's no reservation or affirmative action, even in the lower judiciary. So what do you think the implications of this study would be, that it doesn't matter anyway?

Paul Novosad:

I know, right? Yeah, I wouldn't want to reach that implication from this study. Because like I said, this is one we studied ... Think of the universe of possible biases you can have. So we studied one that was prominent in other settings, and we didn't find it.

In fact, it's not that we found zero, right? We do find this religious bias during Ramadan, we do find there's some evidence that last name affinity seems to matter. I guess I think there's a lot of cases to be made that diversity in these settings is quite valuable, and ...

Naveen Bharathi:

Yeah.

Paul Novosad:

I would be Bayesian and say, "Okay, we have a zero estimate, but then, let's take it, the universal literature from other settings and other contexts, which show that this matters in India, as well." So I've been pretty uncomfortable drawing too many anti-diversity conclusions from this.

Naveen Bharathi:

Right. We have another question, from Matthew, I think you answered that question on, how is it different in different states? Like, the [inaudible 00:44:05]? Does it matter? Do you have any data, or it doesn't matter at all, the state?

Paul Novosad:

Yeah, we did this earlier on, so we haven't done like all the interactions in all the states, to see if the Ramadan effect is bigger in UP, or something like that. It's possible, but it's certainly not the case, that there is significant bias in any of the large states.

We did BIMARU, we did UP Bihar, I don't know if we did every single state. But we went in the places that seemed like you might find more of an effect, and didn't see much there.

Naveen Bharathi:

So another question which I had was, the judge who delivers the judgment is not the same from the beginning, right? Because they change a lot, [crosstalk 00:44:52], right?

Paul Novosad:

I don't know the exact number. In about 50% of cases, it's the same, and in 50%, it's different. This is relevant in thinking about the magnitude of the coefficient that we find here. So the first judge is randomized.

After that, it's more complicated, because whether your case passes to another judge is itself an outcome. So we're doing an ITT on the first judge that you're being assigned to, recognizing that in about half the cases, that's not the judge who's doing the ruling.

But we can cut the sample to the ones that get decided quickly. You may not want to cut the sample that way, because that is itself an outcome. I would say that fact should widen our standard errors, in the sense that we have a first stage that's less than one, but it doesn't suggest obvious bias, given the other tests we've done on this, of this bias in the coefficients.
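The relationship between the ITT on the first judge and a first stage below one can be illustrated with a simulation. This is a hedged sketch under strong assumptions: reassignment to a new judge is treated as independent of the case (the talk notes that in reality it is itself an outcome), and `beta`, `match_prob`, and the baseline acquittal rate are illustrative values, not estimates from the paper. Only the roughly 50% share of cases staying with the first judge comes from the discussion above.

```python
import random

def simulate_itt(beta=0.10, stay_share=0.5, match_prob=0.3, n=200000, seed=1):
    """Return (ITT, first stage, ITT / first stage) from simulated cases."""
    rng = random.Random(seed)
    # For each first-judge match status z: [sum of outcomes, sum of d, count].
    totals = {0: [0.0, 0.0, 0], 1: [0.0, 0.0, 0]}
    for _ in range(n):
        z = 1 if rng.random() < match_prob else 0  # match with first judge
        if rng.random() < stay_share:
            d = z  # the first judge also decides the case
        else:
            # Assumption: the deciding judge is an independent new draw.
            d = 1 if rng.random() < match_prob else 0
        # Hypothetical acquittal probability rises by beta under a match
        # with the deciding judge.
        y = 1 if rng.random() < 0.2 + beta * d else 0
        totals[z][0] += y
        totals[z][1] += d
        totals[z][2] += 1
    mean_y = {z: t[0] / t[2] for z, t in totals.items()}
    mean_d = {z: t[1] / t[2] for z, t in totals.items()}
    itt = mean_y[1] - mean_y[0]
    first_stage = mean_d[1] - mean_d[0]
    return itt, first_stage, itt / first_stage

itt, first_stage, rescaled = simulate_itt()
print(f"ITT={itt:.3f}, first stage={first_stage:.3f}, rescaled={rescaled:.3f}")
```

In this setup the ITT is roughly the first stage times `beta`, so an ITT coefficient understates the effect of the deciding judge's identity by about half. Dividing by the first stage recovers `beta` only because reassignment is random by construction here.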

Naveen Bharathi:

I have a small clarificatory question. Have you done any analysis on different types of penal codes in different cases, like the sedition cases, as a better way of acquittals, or some types of criminal cases? Do you have any plans for that?

Paul Novosad:

We haven't done a lot on the specific case types. I think that's an interesting thing to look into. What's been cool about putting up the public data is, people have a really focused interest, so there are people interested only in sedition, who know way more about this topic than we do, and have way more insight in terms of, even, what are the questions that you should ask there?

Naveen Bharathi:

Yeah, thanks. Thanks, Paul. We have [Bumi 00:46:34]. He has a question. Bumi, Can you unmute yourself and ask the question?

Bumi? Yeah, he might have dialed in a little later. Then Kimberly had a question. "Would the nature of crime or the identity of the lawyers have an impact on this?" I think this has been asked already.

So Bumi had this question, let me read out. "Your data captured the variety of cases people [inaudible 00:47:10]. But there might be instances where we see In-Group Bias. For instance, we might expect female judges to be more sympathetic in cases of sexual violence than male judges. Do you have data on type of case, to be able to detect such a thing?" I think, [inaudible 00:47:23].

Paul Novosad:

Yeah, so we looked at that specifically, and I'm going to have to go to the paper, and see exactly what the point estimate was there. So there's a specific set of cases, which are classified as crimes against women.

I think the majority of these were either sexual assaults or kidnappings. This is a little bit tricky, because the majority of people charged in these cases are men. Suppose that all people charged in these cases were men, then you can find out if those cases have different outcomes for being assigned to female versus male judges.

But you're not able to do the in-group effect. We do the in-group thing, because it turns out 10% of people charged in these cases, or I don't know the exact number, like 10 to 20%, are women. We don't find In-Group Bias effect in these cases.

And I do think, let me see if I can pull this up in real time, and tell you. I think there's an average effect where female judges rule in a specific way relative to male judges.

Naveen Bharathi:

Right.

Paul Novosad:

But it's not something that we can say. It's more of a descriptive result than something that we can see. We don't know if this is the effect of female judges ruling differently on these types of cases, or if it's something different about the types of defendants who are in these cases.

Let me see. Oh, I don't even have it in that. I don't have it here, so I'm not going to be able to do it in real time. Yeah, we wanted. We were interested in that question.

And I'd say, because so few women are charged in these cases, we couldn't think of the right identification strategy to use there. I suppose we could interact the case type with the bias interaction. That might be interesting to see.

Naveen Bharathi:

Whether there's a particular ... Yeah.

Tariq Thachil:

Paul, there's also something that's just coming up in another question that I saw here. I mean, the concept of what's driving bias here? So there's the literature on judicial bias, where, at least my understanding of it, from the American context, it really is about a bias, and rooted in discrimination.

Then there's some of the literature you guys cite at the end, about In-Group Bias in the Indian context, where at least some of that is making arguments about, it's less bias or discrimination. And it's more, again, it's trust or cooperation.

Or there's some kind of reason for in-group favoritism that is rooted in something else, right? And definitely in political science and political economy, there's a lot of argument on that, which is not as much. It's often there's kind of instrumental reasons for it, rather than some kind of social identity-based discrimination.

I was wondering, how you guys are proving this? I mean, there's two different legs. There's the Indian context, which often tilts one way, but then there's the judicial bias.

Are you guys kind of agnostic on that? Or have you kind of approached the study with a particular understanding of what bias is, and what you're looking at here, and how that kind of informs what you guys are doing?

Paul Novosad:

Yeah, I think that's a great description of some of the differences in these contexts. I think, what you're saying, instrumental reasons, the idea was that mass incarceration in the United States is actually a mechanism of creating a pool of coerced labor. You're participating in some institution where incarcerating black men is serving the local economy in some way, right? And I don't know, I don't know.

I don't know that that would be, that that would make, if there's an Indian context where one would find instrumental reasons for a social group to be behaving in a certain way. So that's an interesting difference between the US and the Indian context.

The other context with a lot of papers in this space is the Israeli context, where they look at Arab and Israeli judges and defendants. And again, that is a context with a ... Every country has its conflict between in-groups and out-groups that have different historical roots.

I think this is a really interesting point. And it's totally reasonable to think of the history as driving some of the heterogeneity, and what you find across contexts.

Tariq Thachil:

But also, I think, why the reason that it may be relevant is not what you find. Because it doesn't require an exactly same kind of political economy of prisons or incarceration. But the idea of, when you're rooted in these two different literatures, how are you approaching what you're expecting, what the expectations are, and what you find, or what you don't find?

I mean, in some ways, to not find bias at this very stage of the process ... Does it mean, and what it might mean at the higher stages of the process, is it more or less compatible with certain understandings of why In-Group and out-group bias might manifest?

If it is really about more trust-based, cooperative-based, intimate relations, that if it were to happen in the judicial system, which node would it happen in? And if it's really just about a kind of antipathy towards an out-group that doesn't require that, then where would it happen?

So I do think some idea of the theorization of bias is relevant, for even interpreting and understanding how you're situating this within the Indian case, but also, within a particular judicial structure, and what it is. Because I sometimes think we just draw on this concept of an out-group effect, or an in-group effect, and a co-ethnic match.

I mean, when I see this in some of the literature, it's being invoked very differently. If we do find a premium, one way or the other, it's being read very differently as evidence of a particular kind of social relationship. That's very different from what, how you guys are reading it here, and that might be fine.

But I think being a little bit attentive to that might be helpful, even just with the existing set of results, as you guys are thinking forward about how to interpret that. Because they're almost psychologically completely different things.

Paul Novosad:

Tariq, I agree. I agree completely. I think this is moving into that comparative advantage of non-economists, which is a bit why our paper is kind of naive in the space.

We definitely didn't approach this with any theory in mind. We approached this with, "Oh, this bias occurs in absolutely every setting. So surely, we're going to find it in India."

Then we didn't, and we're like, "Okay, now, how do we think about this now?" So we're exactly in this space of trying to understand, what types of ... I really like the examples that you gave.

Yeah, so we're thinking about this. I'm sure that the conclusion we reached will not be as sophisticated as people in other disciplines can bring to this topic. But I think these are great questions.

Naveen Bharathi:

Yeah, thanks a lot, Paul. And I think I request everyone to go and look at the dataset. I am going to actually go and look at the dataset now, to really see, or at least for SC/ST atrocity cases, and a few other cases I'm really interested in. I think that's a great thing, when data is out in the open, and we can ask the question ourselves, with the data.

Thanks a lot for this presentation, Paul. We really enjoyed it. Hopefully, we can host you in person soon, whenever, at least, things get back to normal.

Next week, we have Professor Malini Sur, who will be giving a book talk on Jungle Passports, the same time, but in India it will be 10:30 p.m. Thanks a lot to everyone for attending, and happy Diwali once again.

And thanks for making time again. And yeah, thanks a lot. Thanks a lot, everyone.

Paul Novosad:

Thanks a lot. I really appreciate it, really enjoyed the discussion.

Tariq Thachil:

Thank you so much, Paul. Happy Diwali, everyone.

Paul Novosad:

Happy Diwali, all.